You open a new tab, type a complicated question into a blank text box, and hit enter.

For a fraction of a second, a cursor blinks. And then, it begins to type.

Word by word, it unfurls a perfectly structured essay, a flawless block of Python code, or a heartfelt poem about your dog. It feels deeply, unnervingly human. It feels like there is a tiny, omniscient librarian trapped inside your screen, reading your mind and speaking directly to you.

But there isn’t.

ChatGPT does not have a brain. It has no memory of its childhood, no concept of what the color blue looks like, and absolutely no understanding of the words it is typing to you. When it gives you relationship advice or explains quantum physics, it isn’t “thinking” about the answer.

So, what is actually happening in that split second between your prompt and its response?

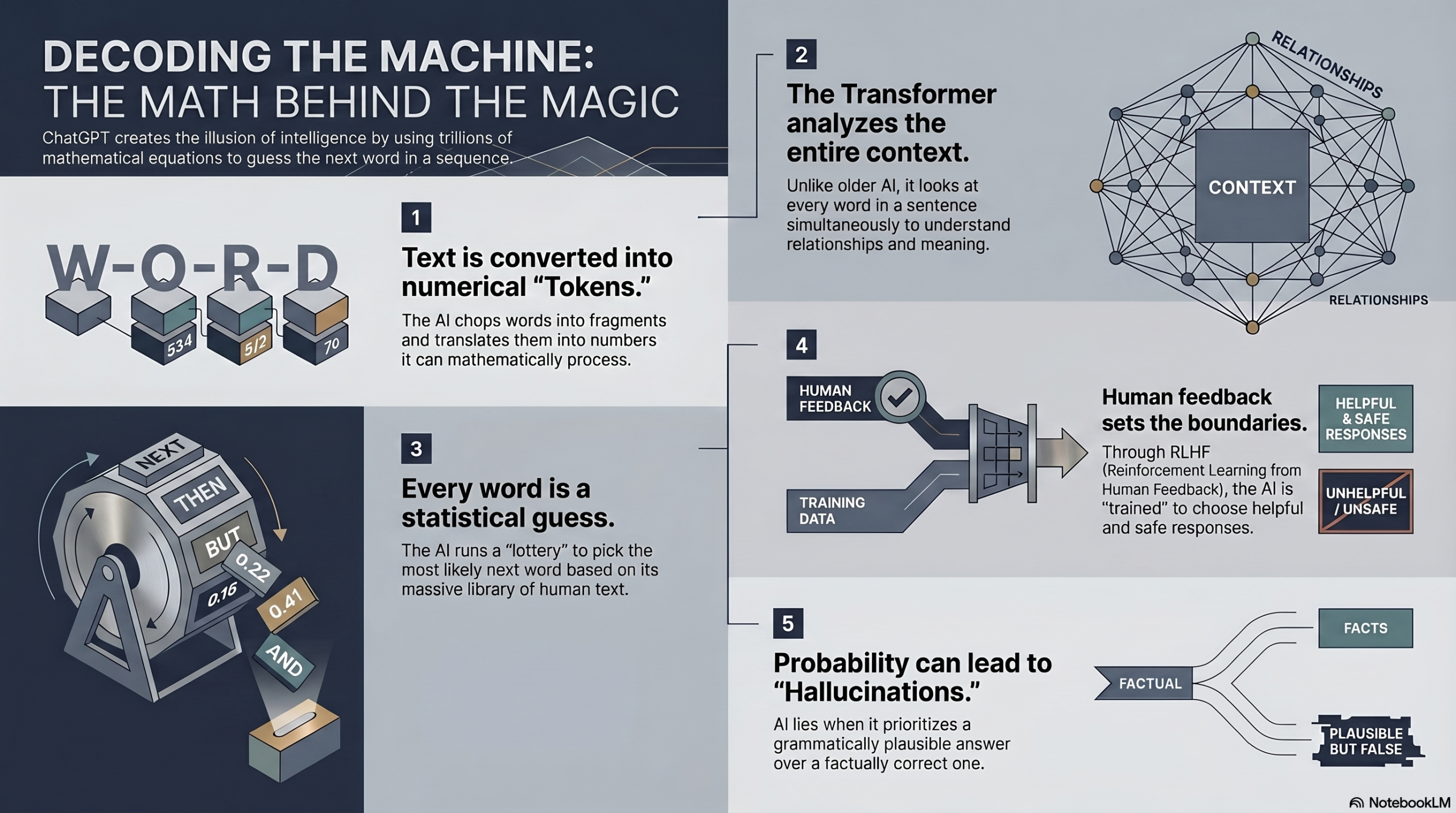

The truth is arguably more fascinating than if the machine were actually alive. ChatGPT is executing a magic trick built on trillions of mathematical calculations. It is an illusion of intelligence constructed entirely out of statistics, probabilities, and an incomprehensibly massive library of human text.

Right now, millions of people are using this tool to write their resumes, diagnose software bugs, and run their businesses, yet almost nobody understands what the machine is actually doing.

We are about to pull back the curtain. We will break down the invisible gears of the world’s most famous Artificial Intelligence, from the way it chops up your words, to the massive neural network that processes them, to the mathematical “guessing game” that generates its answers.

By the end of this, you won’t just know how ChatGPT works. You will understand the fundamental secret of the AI revolution.

Let’s decode the machine.

TABLE OF CONTENTS

- The Core Concept: The World’s Smartest Autocomplete

- Tokens: The Secret Alphabet of AI

- Step-by-Step: The Journey of a Single Prompt

- The Engine: How the “Transformer” Changed Everything

- Why It Learns to Be Nice: The Magic of RLHF

- The Hallucination Problem: Why AI Lies with Confidence

- Common Myths About ChatGPT Debunked

- Fascinating Facts You Didn’t Know About ChatGPT

- The Future: What Happens When AI Stops Guessing and Starts Reasoning?

- Frequently Asked Questions (FAQs)

The Core Concept: The World’s Smartest Autocomplete

To understand ChatGPT, we have to start with something you already use every day: your smartphone’s keyboard.

When you type “I am going to the…” into a text message, your phone suggests three possible next words above the keyboard: “Store”, “Park”, or “Gym”.

Your phone doesn’t know your weekend plans. It just knows that, historically, based on millions of text messages, those are the most statistically likely words to follow that specific phrase. It is playing a game of probability.

ChatGPT is just this exact same autocomplete system, but on an unimaginable, apocalyptic scale.

Instead of looking at only the last five words you typed, ChatGPT can take thousands of preceding words into account. And instead of being trained only on text messages, it was trained on a vast swath of the public internet: Wikipedia articles, Reddit threads, thousands of books, scientific papers, and billions of lines of computer code.

When you ask ChatGPT a question, it doesn’t “look up” the answer in a database. It simply predicts the most statistically likely next word, and then the next word, and then the next word, until the answer is complete.
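The phone-keyboard version of this idea fits in a few lines. The sketch below is only an illustration: the five-sentence corpus is made up, and it counts which word follows which (a "bigram" model), which is far cruder than anything ChatGPT does. The principle of suggesting the statistically most likely continuation is the same.

```python
from collections import Counter, defaultdict

# Tiny made-up corpus standing in for "millions of text messages".
corpus = [
    "i am going to the store",
    "i am going to the park",
    "i am going to the store",
    "i am going to the gym",
    "we went to the store",
]

# Count how often each word follows each preceding word.
follow_counts = defaultdict(Counter)
for sentence in corpus:
    words = sentence.split()
    for prev, nxt in zip(words, words[1:]):
        follow_counts[prev][nxt] += 1


def suggest(prev_word, k=3):
    """Return the k most frequent next words with their estimated probabilities."""
    counts = follow_counts[prev_word]
    total = sum(counts.values())
    return [(word, count / total) for word, count in counts.most_common(k)]


suggestions = suggest("the")
print(suggestions)  # [('store', 0.6), ('park', 0.2), ('gym', 0.2)]
```

Nothing here knows what a store is. The suggestion falls straight out of the counts.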

“ChatGPT is fundamentally a math engine designed to predict the next word.”

Tokens: The Secret Alphabet of AI

Here is the first hidden secret of ChatGPT: It doesn’t actually read English. Or Spanish. Or code.

Computers only understand numbers. So, before the AI can process your prompt, it has to translate your words into math. It does this by chopping your text up into pieces called Tokens.

A token isn’t always a full word. Sometimes it’s a single character; sometimes it’s a chunk of a word.

For example, the word “Hamburger” might be chopped into three tokens:

- Ham (Token ID: 4930)

- bur (Token ID: 893)

- ger (Token ID: 290)

The phrase “ChatGPT is amazing!” is converted into a string of numbers. The AI processes these numbers, does its massive mathematical probability calculations, and spits out a new string of numbers. Those numbers are then translated back into English text for you to read.
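To make the round trip from text to numbers and back concrete, here is a toy tokenizer. It is a sketch under loud assumptions: the three-entry vocabulary and its IDs are simply the illustrative "Hamburger" pieces from above, and the greedy longest-match rule is a simplification. Production tokenizers learn their vocabularies from data (GPT models use a scheme called byte pair encoding) and will split words differently.

```python
# Hypothetical vocabulary: the pieces and IDs are illustrative, not a real tokenizer's.
VOCAB = {"Ham": 4930, "bur": 893, "ger": 290}
ID_TO_PIECE = {token_id: piece for piece, token_id in VOCAB.items()}


def encode(text):
    """Greedily match the longest known piece at each position."""
    ids = []
    pos = 0
    while pos < len(text):
        for end in range(len(text), pos, -1):
            piece = text[pos:end]
            if piece in VOCAB:
                ids.append(VOCAB[piece])
                pos = end
                break
        else:
            # No piece in the vocabulary starts at this position.
            raise ValueError(f"no token covers {text[pos:]!r}")
    return ids


def decode(ids):
    """Map token IDs back to text by concatenating their pieces."""
    return "".join(ID_TO_PIECE[i] for i in ids)


token_ids = encode("Hamburger")
print(token_ids)          # [4930, 893, 290]
print(decode(token_ids))  # Hamburger
```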

When OpenAI says a model has a “Context Window of 128,000 tokens,” it means the AI can “remember” and calculate probabilities across roughly 100,000 words at a single time, the equivalent of an entire 300-page book.
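The arithmetic behind that estimate is easy to check. The two constants below are assumptions, not figures from OpenAI's model specifications: roughly three-quarters of an English word per token is a common rule of thumb, and 320 words per page is a guess at a typical printed book.

```python
# Assumed rule of thumb for English text; the true ratio varies by language and content.
WORDS_PER_TOKEN = 0.75
# Assumed page density for a typical printed book.
WORDS_PER_PAGE = 320

context_window_tokens = 128_000

approx_words = context_window_tokens * WORDS_PER_TOKEN
approx_pages = approx_words / WORDS_PER_PAGE

print(f"{approx_words:,.0f} words, about {approx_pages:.0f} pages")
# 96,000 words, about 300 pages
```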


Step-by-Step: The Journey of a Single Prompt

Let’s trace the exact, real-world journey of what happens when you type something into ChatGPT.

Imagine you type: “Why is the sky blue?”

- Step 1: Tokenization. Your sentence is instantly chopped into number-based tokens.

- Step 2: Entering the Matrix. These tokens are fed into the massive neural network (the “brain” of the AI, hosted on giant server farms).

- Step 3: Attention and Context. The AI looks at the tokens and uses its training to understand the relationships between them. It realizes “sky” and “blue” are the core subjects, and “Why” means it needs to generate an explanation, not just a description.

- Step 4: The Lottery. The AI calculates the probability of every token in its vocabulary being the first token of the answer. It might determine that “The” has an 80% chance of being right, and “Because” has a 15% chance.

- Step 5: The Roll of the Dice. To keep the AI from sounding like a boring robot, it has a “temperature” setting that introduces a tiny bit of randomness. It rolls a digital die and picks a highly probable word (Let’s say, “The”).

- Step 6: The Loop. It then feeds “Why is the sky blue? The” back into itself, and runs the entire multi-billion-calculation process again to guess the second word. And then the third.

It repeats this entire loop for every token it produces, which is why the answer appears piece by piece, typically at dozens of words per second.
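Steps 4 through 6 can be sketched in code. Everything named here is hypothetical: `toy_model` is a hard-coded lookup table standing in for the neural network, and its scores were picked so that “The” and “Because” land near the 80% and 15% used above. The softmax function, the temperature division, and the feed-it-back-in loop are the parts that mirror how real generation is usually described.

```python
import math
import random


def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities; lower temperature sharpens the distribution."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def toy_model(context):
    """Hypothetical stand-in for the network: returns (token, score) pairs.

    A real model computes scores from the whole context; this lookup
    only checks the last token.
    """
    table = {
        "?": [("The", 3.0), ("Because", 1.3), ("Blue", 0.0)],
        "The": [("sky", 2.5), ("sun", 1.0), ("<end>", 0.0)],
        "Because": [("sunlight", 2.0), ("<end>", 0.5)],
    }
    return table.get(context[-1], [("<end>", 0.0)])


def generate(prompt_tokens, temperature=0.8, max_new_tokens=5, seed=0):
    rng = random.Random(seed)
    context = list(prompt_tokens)
    for _ in range(max_new_tokens):
        candidates = toy_model(context)  # Step 4: score each candidate
        tokens = [tok for tok, _ in candidates]
        probs = softmax([score for _, score in candidates], temperature)
        # Step 5: roll the dice, weighted by probability
        next_token = rng.choices(tokens, weights=probs, k=1)[0]
        if next_token == "<end>":
            break
        context.append(next_token)  # Step 6: feed it back in
    return context


prompt = ["Why", "is", "the", "sky", "blue", "?"]
probs = softmax([3.0, 1.3, 0.0])
print([round(p, 2) for p in probs])
print(generate(prompt))
```

Try lowering `temperature` toward 0.1: the top candidate's probability climbs toward 1 and the output becomes nearly deterministic. Raising it flattens the odds and makes unusual word choices more likely.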

The Engine: How the “Transformer” Changed Everything

You can’t talk about ChatGPT without talking about the “T” in its name.

GPT stands for Generative Pre-trained Transformer.

Before 2017, AI models struggled to write long paragraphs. If you asked an older model to write a story, it would forget the main character’s name by the third paragraph. These models read text strictly from left to right, one word at a time, like a human reading a book.

Then, Google researchers published a legendary paper called “Attention Is All You Need.” They invented the Transformer architecture.

A Transformer doesn’t read left to right. It looks at the entire sentence all at once. It uses a mathematical concept called “Self-Attention” to draw invisible lines of connection between words, no matter how far apart they are.

If you feed it the sentence: “The bank of the river was muddy, so I couldn’t deposit my money there,” older AIs would get confused by the word “bank.” The Transformer looks at the whole sentence, sees the words “muddy” and “river,” and mathematically weights the word “bank” to mean a riverbank, not a financial institution.

This mechanism is the secret sauce that gave AI the illusion of true human comprehension.
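Here is a stripped-down sketch of that weighting, using the “bank” example. The two-number word vectors are invented for illustration (first number loosely "nature", second "finance"), and the learned query, key, and value matrices of a real Transformer are replaced by the identity, so treat this as the skeleton of scaled dot-product self-attention rather than a faithful implementation.

```python
import math

# Hypothetical 2-D embeddings: axis 0 ~ "nature", axis 1 ~ "finance".
# Real models learn vectors with thousands of dimensions.
embeddings = {
    "bank": [1.0, 1.0],   # ambiguous on its own
    "river": [2.0, 0.0],
    "muddy": [1.5, 0.0],
    "money": [0.0, 2.0],
}


def softmax(scores):
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def self_attention(tokens):
    """Scaled dot-product self-attention with identity projections.

    Real Transformers first multiply each vector by learned matrices;
    that step is skipped here to keep the sketch short.
    """
    vectors = [embeddings[t] for t in tokens]
    dim = len(vectors[0])
    outputs = []
    for query in vectors:
        # Score every token against the current one, then normalize.
        scores = [dot(query, key) / math.sqrt(dim) for key in vectors]
        weights = softmax(scores)
        # Each output is a weighted blend of every token's vector.
        blended = [
            sum(w * v[i] for w, v in zip(weights, vectors)) for i in range(dim)
        ]
        outputs.append(blended)
    return outputs


river_context = self_attention(["bank", "river", "muddy"])[0]
money_context = self_attention(["bank", "money"])[0]
print(river_context)  # "bank" pulled toward the nature axis
print(money_context)  # "bank" pulled toward the finance axis
```

The same starting vector for “bank” ends up in two different places depending on its neighbors, which is the whole trick: meaning is computed from context rather than looked up.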

Why It Learns to Be Nice: The Magic of RLHF

If OpenAI just trained a Transformer on the raw, unfiltered internet, it would be a disaster. It would curse at you, give you instructions on how to build illegal weapons, and spout unhinged conspiracy theories (because the internet is full of that stuff).

To turn a raw, chaotic text-predictor into the polite, helpful assistant you know, scientists use a process called RLHF: Reinforcement Learning from Human Feedback.

Think of RLHF like dog training.

- The engineers gave the raw AI a prompt: “Tell me a joke.”

- The AI generated three different jokes.

- Human workers read the jokes and ranked them. “Joke A was funny. Joke B was offensive. Joke C didn’t make sense.”

- They fed these rankings back into the AI, essentially giving it a “treat” for Joke A and a “scolding” for Joke B.

By doing this millions of times, the AI learned a mathematical boundary of what humans consider “helpful, harmless, and honest.” It doesn’t know why it shouldn’t tell you how to hotwire a car; it just knows that generating those specific tokens leads to a mathematical penalty in its programming.
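The treat-and-scolding loop can be caricatured in a few lines. This is a toy under heavy assumptions: the reward numbers are made up to match the joke rankings above, and the "model" is just three adjustable scores. Real RLHF pipelines are usually described as training a separate reward model on the human rankings and then tuning the language model with a reinforcement learning algorithm, none of which is reproduced here. What the sketch does show is the core idea of nudging probabilities toward outputs that earn above-average reward.

```python
import math


def softmax(logits):
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


jokes = ["Joke A", "Joke B", "Joke C"]
# Hypothetical scores derived from the human rankings:
# a treat for A, a scolding for B, a mild penalty for C.
rewards = [1.0, -1.0, -0.5]

# The "policy" starts with no preference among the three jokes.
logits = [0.0, 0.0, 0.0]
learning_rate = 0.5

before = softmax(logits)
for _ in range(200):
    probs = softmax(logits)
    expected_reward = sum(p * r for p, r in zip(probs, rewards))
    # Exact gradient of expected reward with respect to each logit:
    # raise logits whose reward beats the current average, lower the rest.
    for i in range(len(logits)):
        logits[i] += learning_rate * probs[i] * (rewards[i] - expected_reward)
after = softmax(logits)

for joke, p0, p1 in zip(jokes, before, after):
    print(f"{joke}: {p0:.2f} -> {p1:.2f}")
```

At no point does the code contain a rule saying "offensive jokes are bad." The preference exists only as numbers that were pushed up or down.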

The Hallucination Problem: Why AI Lies with Confidence

Have you ever asked ChatGPT for a biography of a niche historical figure, and it completely made up a fake book that they wrote? Or asked it a math problem, and it confidently told you 2+2=5?

This is called an AI Hallucination. And when you understand how the AI works, hallucinations make perfect sense.

Remember: ChatGPT does not have a database of facts. It is not searching Wikipedia. It is predicting what sounds like a correct answer.

If you ask it for a quote by Abraham Lincoln, it calculates the statistical probability of words that sound like something Lincoln would say in the 1800s. Usually, its training data is so good that the “most probable” answer is also the “factually correct” answer.

But if it doesn’t have enough training data on a topic, the mathematical engine just keeps running. It pieces together a sentence that sounds grammatically perfect, highly plausible, and completely false. It is the world’s greatest BS artist, because it lacks the cognitive ability to say, “Wait, I don’t actually know this.”
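One way to picture this: the prediction step always produces a winner, whether the odds are lopsided or nearly flat. In the sketch below, the candidate years and both sets of scores are invented for illustration. Entropy is used as a rough measure of how unsure the distribution is, but notice that nothing in the selection step consults it.

```python
import math


def softmax(logits):
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def entropy_bits(probs):
    """Shannon entropy: higher means the bets are spread more evenly."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


candidates = ["1865", "1867", "1871", "1874"]

# Hypothetical scores for a well-covered fact versus an obscure one.
confident = softmax([6.0, 1.0, 0.5, 0.0])
unsure = softmax([0.4, 0.3, 0.2, 0.1])

for label, probs in [("well known", confident), ("obscure", unsure)]:
    best = max(range(len(probs)), key=probs.__getitem__)
    print(
        f"{label}: picks {candidates[best]} "
        f"(p={probs[best]:.2f}, entropy={entropy_bits(probs):.2f} bits)"
    )
```

Both cases print a year with the same fluency. In the second one the model was close to guessing, yet the text it emits carries no trace of that.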

Common Myths About ChatGPT Debunked

Myth 1: ChatGPT searches the internet to answer my questions.

False. While newer versions have a specific “Browse with Bing” feature they can activate, the core ChatGPT model generates answers entirely from the static “weights” (memories) frozen in its neural network during its training phase. If you turn off the browsing feature, it is completely disconnected from the live internet.

Myth 2: ChatGPT understands my emotions.

False. It recognizes the linguistic patterns of emotion. If you say you are sad, it mathematically calculates that words like “sorry,” “here for you,” and “understand” are the most appropriate responses. It feels no empathy; it is just executing excellent statistical mirroring.

Myth 3: AI is going to become conscious any day now.

False. LLMs (Large Language Models) are incredibly impressive, but they are still just text-prediction algorithms. A calculator that does calculus isn’t conscious of the math, and ChatGPT isn’t conscious of the text.

Fascinating Facts You Didn’t Know About ChatGPT

- The Cost of “Reading”: Training a massive model like GPT-4 is estimated to have cost OpenAI over $100 million in purely computational power, utilizing tens of thousands of specialized Nvidia GPUs running for months.

- The Thirsty Servers: Generating AI text requires immense computing power, which generates massive heat. It is estimated that interacting with ChatGPT for just 10 to 50 prompts “drinks” a 500ml bottle of fresh water (used to cool the data centers).

- It Doesn’t Know What It’s Saying: Because it generates text one token at a time, ChatGPT literally does not know how a sentence is going to end when it begins typing the first word. It is improvising on the fly, 100% of the time.

The Future: What Happens When AI Stops Guessing and Starts Reasoning?

The era of the “autocomplete” AI is already reaching its peak. The next frontier in artificial intelligence is moving beyond just guessing the next word, and teaching the machine to actually reason.

Companies like OpenAI are developing models (like the “o1” series) that use “Chain of Thought” reasoning. Instead of instantly blurting out the most statistically likely answer, the AI is trained to stop, break the problem down into steps, test its own logic, correct its mistakes in a hidden scratchpad, and then give you the final output.

We are moving from AI that talks fast, to AI that thinks slow.

As these systems gain the ability to interact with the real world by controlling your web browser, booking flights, and running code, the invisible math engine behind your screen is going to step out of the chatbox and into our daily reality.

FREQUENTLY ASKED QUESTIONS (FAQs)

1. Does ChatGPT save my conversations?

Yes. By default, OpenAI saves your conversations to train future versions of the model. You can opt out of this by turning off “Chat History & Training” in your settings.

2. Can ChatGPT detect if I use it to cheat on an essay?

Not reliably. AI detection tools look for low “perplexity” (highly predictable text patterns), but they frequently flag human-written text as AI, and miss heavily edited AI text. No detector is 100% accurate.

3. What does “GPT” stand for?

It stands for Generative Pre-trained Transformer. “Generative” means it creates text, “Pre-trained” means it studied the internet before you talked to it, and “Transformer” is the specific neural network architecture it uses.

4. Why is ChatGPT sometimes slow to type?

It requires massive server power. If millions of people are using it at the exact same time, the servers (GPUs) have to queue up the complex mathematical calculations for everyone’s next token, which causes lag.

5. How much data was ChatGPT trained on?

While exact numbers are closely guarded, GPT-4 is estimated to have been trained on roughly 10 trillion “words” (tokens), comprising a significant percentage of the high-quality, publicly accessible internet.

6. Can ChatGPT “think” when I am not talking to it?

No. It is essentially frozen in a dormant state. It requires a prompt (an input of data) to trigger the mathematical calculations that generate an output. It does not daydream or ponder while idle.

7. Why is it so bad at drawing hands or doing complex math?

Text-based LLMs are bad at math because they don’t calculate math; they predict the next number based on text patterns. For images, AI image generators struggle with hands because hands look drastically different from every angle in training data, confusing the pattern-matching system.

8. Is ChatGPT biased?

Yes. Because it was trained on human text from the internet, it absorbed all the historical biases, stereotypes, and cultural slants present in that data. OpenAI attempts to “align” it to be neutral via RLHF, but biases still bleed through.

9. Will ChatGPT replace programmers?

Currently, it acts more like a highly advanced “co-pilot.” It can write standard boilerplate code and debug errors brilliantly, but it lacks the architectural reasoning to independently build and deploy massive, complex software systems from scratch.

10. Can I run ChatGPT on my own computer without the internet?

You cannot run ChatGPT itself, as it requires massive data centers. However, you can run smaller, open-source models (like Meta’s Llama) locally on a powerful consumer laptop without an internet connection!

CONCLUSION

The next time you hit “Enter” on ChatGPT and watch the words effortlessly flow across your screen, you will no longer be fooled by the magic trick.

You will know that there is no ghost in the machine. There is no omniscient mind. There is only a dizzying storm of mathematics: trillions of connections lighting up in the dark, chopping your words into numbers, calculating probabilities, and rolling the dice on the English language thousands of times a second.

We have successfully taught rocks to do math, and we have taught that math to speak.

ChatGPT might not truly understand the poetry it writes or the code it generates, but that doesn’t make the technology any less profoundly beautiful. It is a mirror reflecting the entire collected knowledge, history, and language of humanity, distilled into a single, blinking cursor, just waiting to guess your next word.
