INTRODUCTION

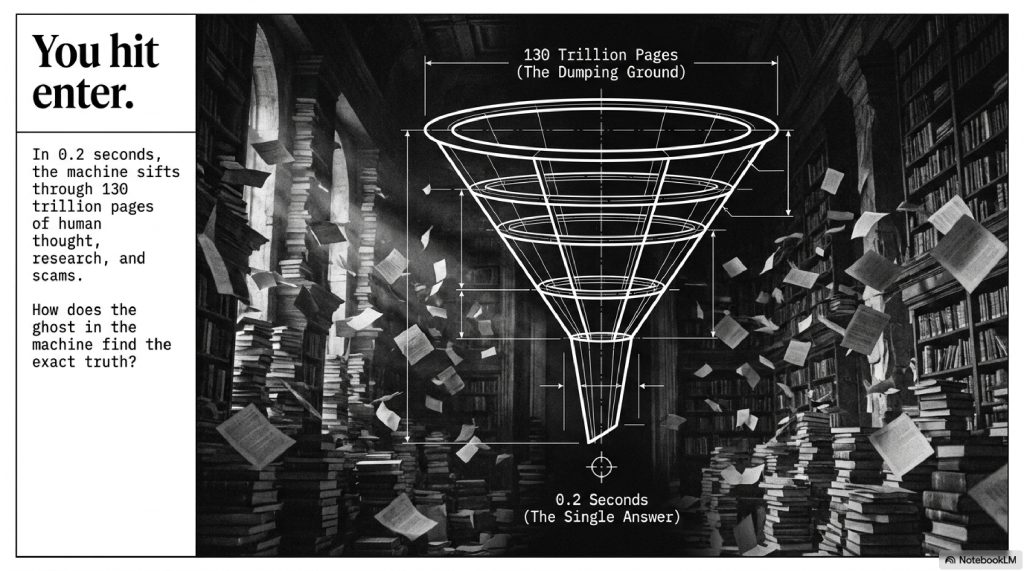

You stare at the blinking cursor on a blank white screen.

You type a question, hit “Enter,” and in roughly 0.2 seconds, the screen populates with a curated list of blue links, video recommendations, and AI-generated summaries that perfectly answer your query.

We do this billions of times a day. We ask the machine to diagnose our health symptoms, find us the best local pizza, and teach us highly complex skills. We trust the results so implicitly that if a website doesn’t appear on the first page, we assume it simply doesn’t exist.

But stop and think about the sheer scale of what just happened. In 2016, Google reported that it knew of more than 130 trillion individual web pages, and the web has only grown since. It is a chaotic, unregulated dumping ground of human thought, filled with brilliant research, blatant scams, broken links, and petabytes of video content.

How does a piece of software instantly sift through that incomprehensible mountain of data to find the exact piece of information you were looking for?

For years, people assumed “Search Engine Optimization (SEO)” was just a game of stuffing the right keywords into a webpage. But the truth is far more cinematic. The modern search engine is a sprawling artificial intelligence ecosystem. It utilizes armies of digital “spiders,” massive databases the size of small cities, and algorithms that mathematically model human language to understand our underlying intent.

In this investigative deep dive, we are going to rip the hood off the world’s most powerful digital engine. We will explore how the internet is mapped, how websites are judged, and how the massive shifts toward Artificial Intelligence are changing the rules of human discovery forever.

Let’s decode the ghost in the machine.

TABLE OF CONTENTS

- The Big Picture: The Infinite Library

- Step 1: The Spiders (Crawling the Web)

- Step 2: The Card Catalog (Indexing)

- Step 3: The Judge (Ranking Algorithms)

- Real-World Example: Translating Human Intent

- The Advanced Layer: Entities, Neural Networks, and AI

- Common Myths About Search Engines

- Fascinating Facts You Didn’t Know

- The Future: Generative Engine Optimization

- Frequently Asked Questions (FAQs)

The Big Picture: The Infinite Library

To understand how a search engine works, imagine the internet as a colossal library.

However, this library has no central filing system and no dedicated librarians, and new books are dumped onto the floor every single second. Furthermore, a significant portion of these books are blank, contain false information, or are just advertisements for cheap watches.

If you walk into this library and shout, “I need a book on how to bake a cake!” you can’t just rely on luck.

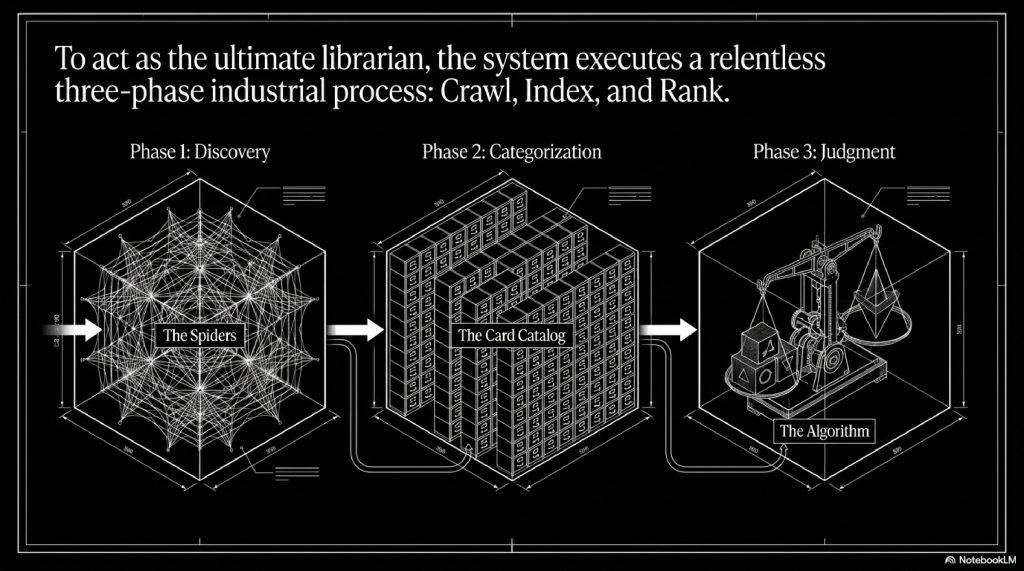

A search engine like Google acts as the ultimate, hyper-intelligent librarian. To do its job, it must perform three distinct functions in order:

- Crawl: It must find every single book in the building.

- Index: It must read every book, understand the metadata, and categorize what it is about.

- Rank: When you ask a question, it must instantly determine which book is the most helpful, accurate, and authoritative.

Let’s break down exactly how this happens in the real world.

Step-by-Step Breakdown

Step 1: The Spiders (Crawling the Web)

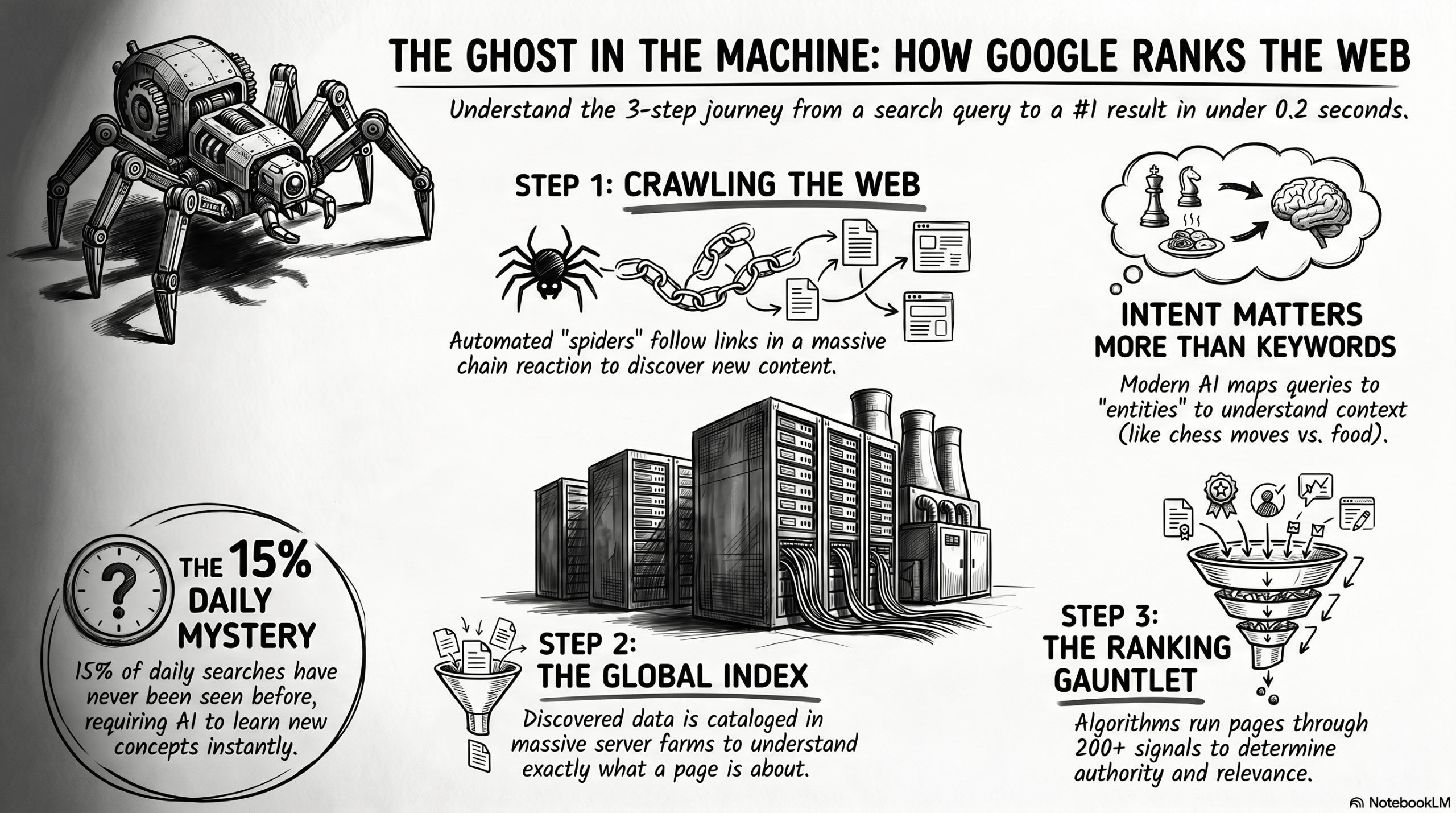

Search engines don’t magically know when a new website or video is created. They have to discover it. They do this using automated software programs known as Crawlers or Spiders (Google’s is famously named Googlebot).

Think of a spider landing on a webpage. The first thing it does is read the text and scan the metadata. But more importantly, it looks for links. When it finds a link pointing to another page, it follows it. Then it follows the links on that page. This creates a massive chain reaction, allowing the spiders to crawl along the interconnected “web” of the internet, discovering new content.
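To make that chain reaction concrete, here is a minimal sketch of the link-following loop in Python. It is not how Googlebot is implemented. The tiny in-memory TOY_WEB, the example.com URLs, and the crawl and toy_fetch_links names are all made up for illustration, and a real crawler would fetch pages over the network and respect each site’s crawling rules.

```python
from collections import deque

# Hypothetical in-memory "web": each URL maps to the links found on that page.
TOY_WEB = {
    "https://example.com/": ["https://example.com/a", "https://example.com/b"],
    "https://example.com/a": ["https://example.com/b", "https://example.com/c"],
    "https://example.com/b": ["https://example.com/"],
    "https://example.com/c": [],
}


def toy_fetch_links(url):
    """Stand-in for downloading a page and extracting its links."""
    return TOY_WEB.get(url, [])


def crawl(seed, fetch_links, max_pages=100):
    """Breadth-first crawl: visit a page, then queue every link not seen before."""
    seen = {seed}
    frontier = deque([seed])
    visited_in_order = []
    while frontier and len(visited_in_order) < max_pages:
        url = frontier.popleft()
        visited_in_order.append(url)
        for link in fetch_links(url):
            if link not in seen:  # never queue the same page twice
                seen.add(link)
                frontier.append(link)
    return visited_in_order


discovered = crawl("https://example.com/", toy_fetch_links)
print(discovered)
```

Starting from a single seed page, the loop reaches every page that is linked from somewhere it has already visited. That is also why a page with no incoming links can stay undiscovered.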

Step 2: The Card Catalog (Indexing)

Once the spider has discovered a page, the data is sent to the Index.

Google’s index is a database so unimaginably large it spans across massive server farms globally. When a page is indexed, the search engine catalogs the text, images, tags, and video descriptions. It figures out exactly what the page is about. Content creators often use tools like Google Search Console to monitor this process, ensuring their pages are actually being read and stored, while utilizing software like Rank Math to structure their SEO metadata so the spiders don’t get confused.
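The classic data structure behind this kind of catalog is an inverted index, which maps each word to the pages that contain it. The sketch below is a deliberately tiny version under stated assumptions: the PAGES data and the tokenize, build_index, and lookup names are hypothetical, and a production index stores far more than words (positions, metadata, images, and so on).

```python
import re
from collections import defaultdict

# Hypothetical pages: URL -> page text.
PAGES = {
    "cake.example/recipe": "How to bake a chocolate cake at home",
    "bread.example/guide": "How to bake sourdough bread",
    "watch.example/ad": "Cheap watches for sale",
}


def tokenize(text):
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(pages):
    """Map every word to the set of URLs whose text contains it."""
    index = defaultdict(set)
    for url, text in pages.items():
        for term in tokenize(text):
            index[term].add(url)
    return index


def lookup(index, query):
    """Return the URLs that contain every word of the query."""
    terms = tokenize(query)
    if not terms:
        return set()
    results = set(index.get(terms[0], set()))
    for term in terms[1:]:
        results &= index.get(term, set())
    return results


index = build_index(PAGES)
print(lookup(index, "bake cake"))
```

Because the index is built ahead of time, answering a query is a matter of intersecting a few sets rather than rereading every page.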

Step 3: The Judge (Ranking Algorithms)

Crawling and indexing are just data collection. The true magic, and the multi-billion-dollar secret, is the ranking.

When you type a query, Google dives into its index and finds millions of pages containing your search terms. It then runs those pages through a gauntlet of over 200 ranking signals in a fraction of a second to determine which one deserves the #1 spot.
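Google does not publish its ranking formula, so the following is only a sketch of the general idea of combining several signals into one score. The three signals, their weights, and the candidate pages are invented for illustration, and a weighted sum is an assumption rather than Google’s actual method.

```python
# Hypothetical signal weights; real systems use far more signals.
WEIGHTS = {"relevance": 0.5, "link_quality": 0.3, "page_speed": 0.2}

# Hypothetical candidate pages, each signal normalized to the range 0..1.
CANDIDATES = {
    "a.example": {"relevance": 0.9, "link_quality": 0.4, "page_speed": 0.8},
    "b.example": {"relevance": 0.6, "link_quality": 0.9, "page_speed": 0.9},
    "c.example": {"relevance": 0.3, "link_quality": 0.2, "page_speed": 1.0},
}


def score(signals, weights=WEIGHTS):
    """Weighted sum of the signals; a missing signal counts as 0."""
    return sum(weights[name] * signals.get(name, 0.0) for name in weights)


def rank(candidates, weights=WEIGHTS):
    """Return the candidate URLs ordered from highest to lowest score."""
    return sorted(
        candidates,
        key=lambda url: score(candidates[url], weights),
        reverse=True,
    )


ranking = rank(CANDIDATES)
print(ranking)
```

In this toy data, b.example edges out a.example even though a.example is the more relevant page, because its links and speed are stronger. Changing the weights changes the winner, which is exactly what an algorithm update does.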

Real-World Example: Translating Human Intent

Let’s look at how the algorithm handles complex human intent.

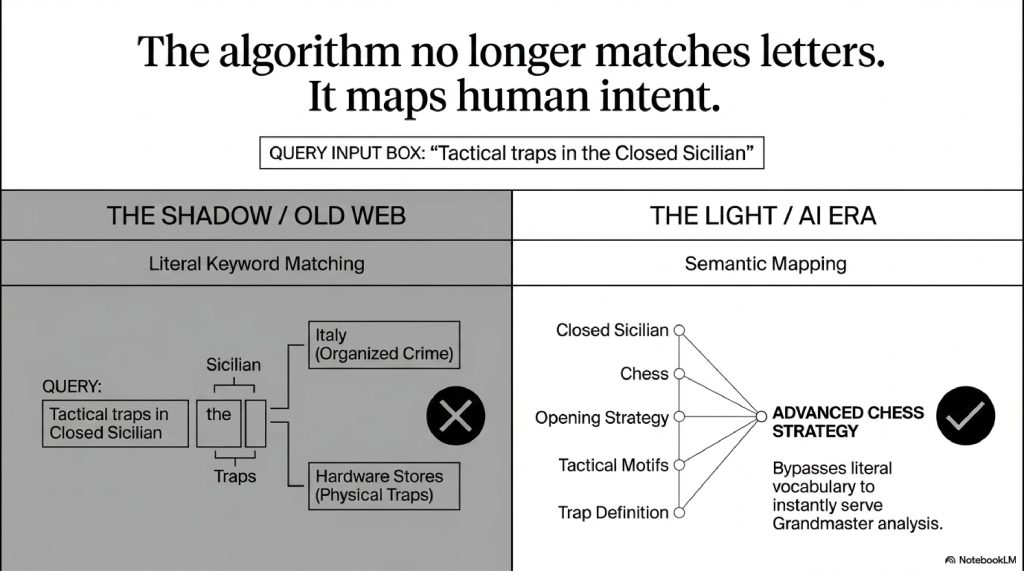

Imagine you sit down at your keyboard and search for: “Tactical traps in the Closed Sicilian.”

Ten years ago, the search engine might have handed you an article about organized crime in Italy because of the word “Sicilian,” or a hardware store catalog because of the word “traps.” It was just matching letters on a page.

Today, the algorithm is semantic. It instantly maps your query to the entity of advanced chess strategy. It knows that the “Closed Sicilian” is a specific chess opening, and “tactical traps” relates to specific board movements. The search engine bypasses the literal definitions of the individual words and instantly serves you high-level Grandmaster video analysis and move-by-move scripts.

Google understands the context of your search, not just the vocabulary.
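A crude way to see why context beats vocabulary is to let every word in the query “vote” for the topics it could belong to. This is a toy sketch, not Google’s semantic model (which relies on learned language representations): the WORD_SENSES table and the infer_topic function are hypothetical.

```python
from collections import Counter

# Hypothetical table of the topics each ambiguous word could belong to.
WORD_SENSES = {
    "sicilian": {"chess", "organized crime", "travel"},
    "traps": {"chess", "hardware"},
    "tactical": {"chess", "military"},
}


def infer_topic(query):
    """Pick the topic that the most words in the query agree on."""
    votes = Counter()
    for word in query.lower().split():
        for topic in WORD_SENSES.get(word, ()):
            votes[topic] += 1
    if not votes:
        return None  # no known word, so no guess
    return votes.most_common(1)[0][0]


print(infer_topic("Tactical traps in the Closed Sicilian"))
```

Taken alone, “Sicilian” is a three-way tie. Taken together, the three ambiguous words agree on only one topic, so chess wins the vote.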

The Advanced Technical Layer

In the modern era, ranking isn’t just about reading basic HTML text. The technology has evolved into a highly sophisticated AI ecosystem.

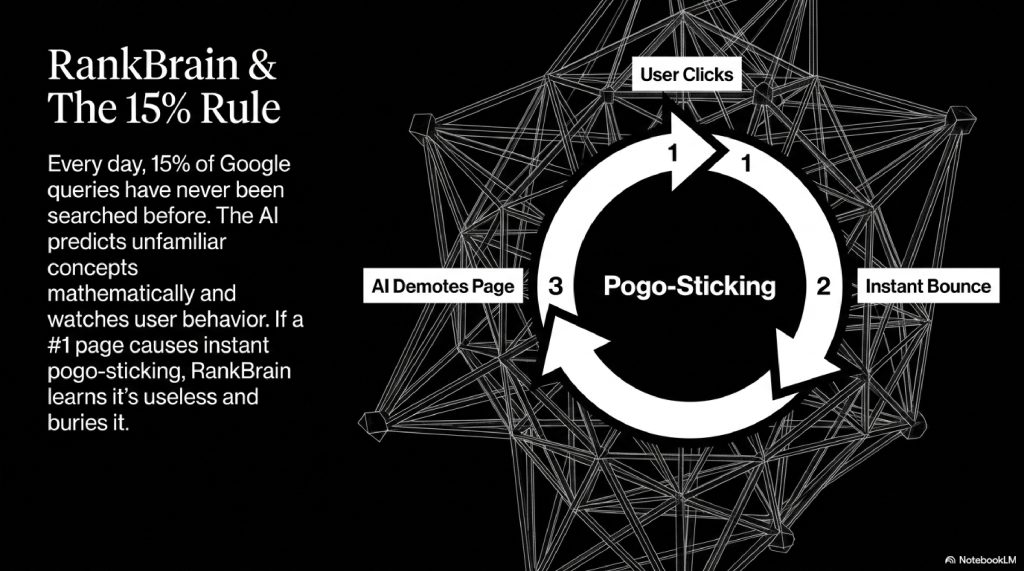

1. RankBrain and Machine Learning: Google uses an AI system called RankBrain to help interpret queries. If RankBrain sees a word or phrase it isn’t familiar with, it can make a mathematical guess as to which words or phrases have a similar meaning and filter the results accordingly. Many SEO practitioners also believe that user behavior feeds back into ranking: if a site ranks #1 but users click it and immediately hit the “Back” button (a pattern known as pogo-sticking), the page looks unhelpful. Google has not confirmed that it uses pogo-sticking as a direct ranking signal, so treat it as a warning sign about the page rather than a documented rule.

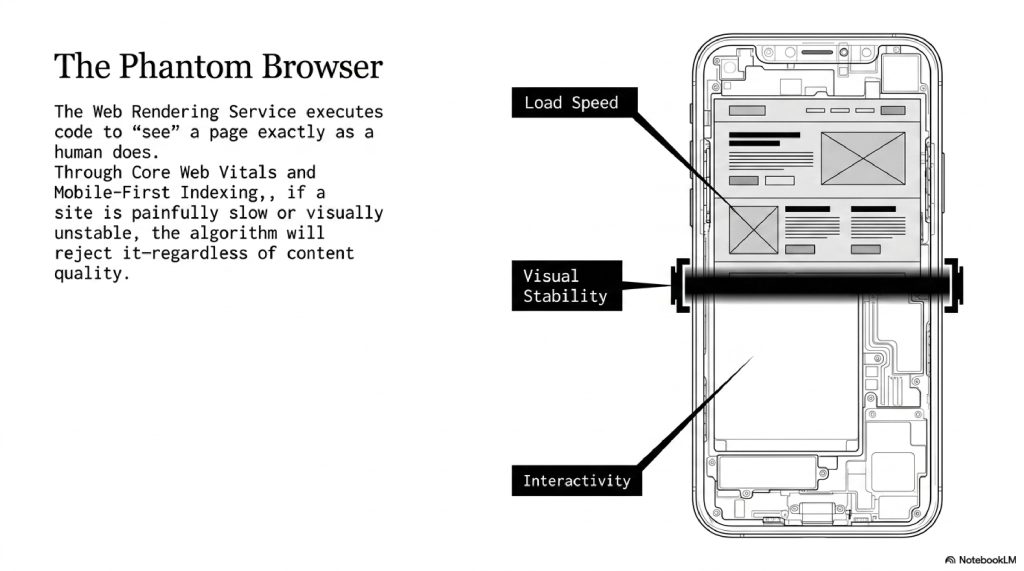

2. Core Web Vitals and WRS (Web Rendering Service): Today’s websites are heavy, dynamic applications. When Googlebot hits a page, it passes the data to the Web Rendering Service. The WRS acts like a phantom web browser, executing the page’s code to “see” the page the way a human visitor would. Google also tracks “Core Web Vitals”: Does the page load fast? Does the layout shift while reading? Does it respond quickly to input? A painfully slow site is at a disadvantage against equally relevant competitors, although page experience does not outweigh the relevance and quality of the content.

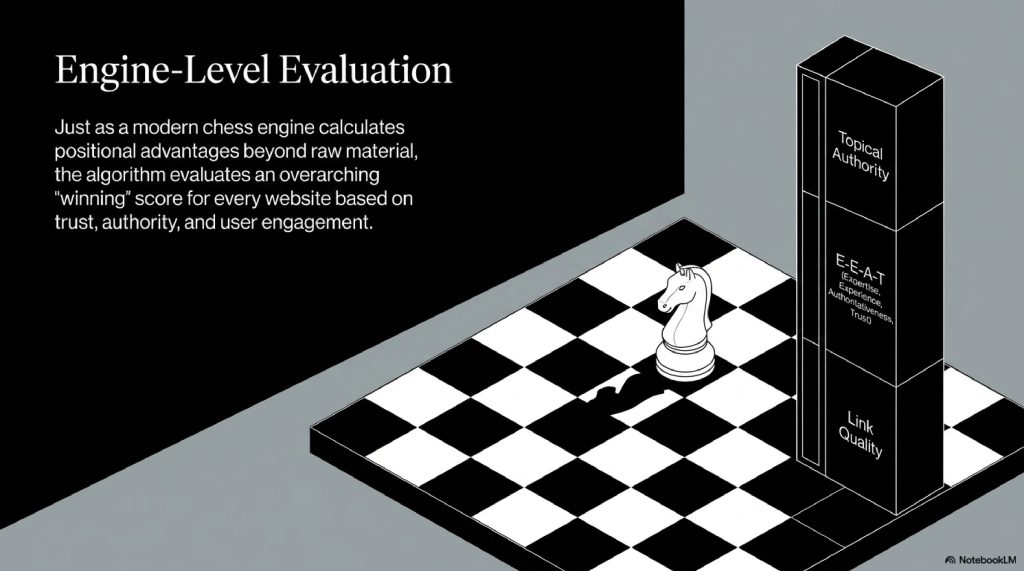

3. Engine-Level Evaluations: Think of the search algorithm like a powerful chess engine (such as Stockfish NNUE) evaluating a board. Just as an engine doesn’t just look at the raw material count but evaluates positional advantages, prophylaxis, and king safety, Google evaluates the positional advantage of a website. It weighs factors like topical authority, user engagement, and the quality of incoming links to calculate an overall “winning” score for the search result.
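The Core Web Vitals from item 2 come with published thresholds. As of this writing, Google’s guidance rates three metrics: Largest Contentful Paint (loading), Interaction to Next Paint (responsiveness), and Cumulative Layout Shift (visual stability). The sketch below classifies a page against those thresholds. The function names and the sample measurements are hypothetical, and the metrics and cut-offs have changed before, so check the current documentation before relying on the numbers.

```python
# (good_up_to, poor_above) per metric, per Google's published guidance
# at the time of writing. Verify against current documentation.
THRESHOLDS = {
    "lcp_seconds": (2.5, 4.0),  # Largest Contentful Paint
    "inp_ms": (200, 500),       # Interaction to Next Paint
    "cls": (0.1, 0.25),         # Cumulative Layout Shift (unitless)
}


def rate(metric, value):
    """Classify one measurement as good, needs improvement, or poor."""
    good_up_to, poor_above = THRESHOLDS[metric]
    if value <= good_up_to:
        return "good"
    if value <= poor_above:
        return "needs improvement"
    return "poor"


def rate_page(measurements):
    """Rate every metric in a dict of measurements."""
    return {metric: rate(metric, value) for metric, value in measurements.items()}


# Hypothetical measurements for a single page.
report = rate_page({"lcp_seconds": 2.1, "inp_ms": 350, "cls": 0.31})
print(report)
```

For the sample page, loading is rated good, responsiveness needs improvement, and layout stability is poor.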

Common Myths About Search Engines

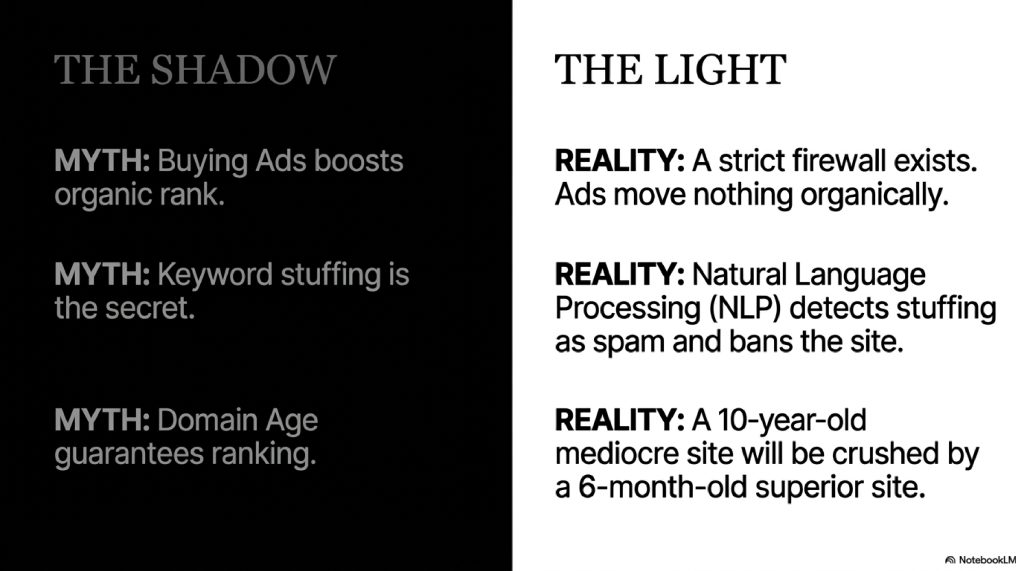

Myth 1: “Buying Google Ads makes your organic search ranking go up.” Reality: Google maintains a strict, impenetrable firewall between its paid advertising division and its organic search algorithm. Spending millions on ads will not move your organic ranking an inch.

Myth 2: “Keyword stuffing is the secret to ranking.” Reality: In the early 2000s, people would repeat a keyword hundreds of times in white text on a white background. Today, Google’s spam policies explicitly cover keyword stuffing and hidden text, and its NLP (Natural Language Processing) systems detect both. Offending pages can be demoted or removed from results entirely.

Myth 3: “Domain Age guarantees a high rank.” Reality: While older domains tend to have more authority simply because they’ve had more time to accumulate trusted links, age alone is not a ranking factor. A 10-year-old site with terrible content will be easily crushed by a 6-month-old site providing a vastly superior, highly targeted user experience.

The Future of the Technology

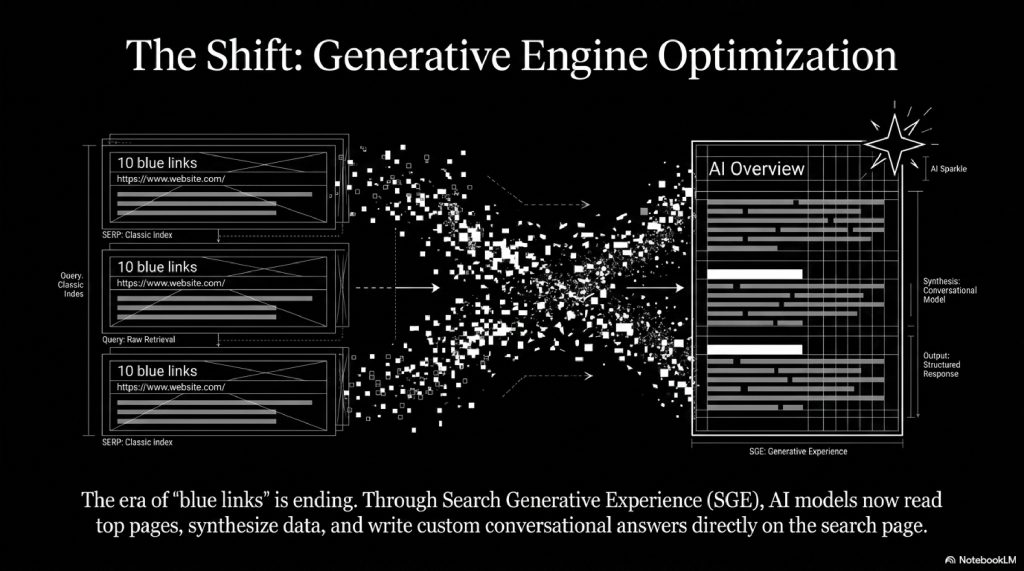

The search landscape is currently experiencing a massive earthquake. The traditional “ten blue links” are giving way to AI-generated answers, which Google first tested as the Search Generative Experience (SGE) and now ships as AI Overviews.

Instead of just pointing you to a website, AI models are now reading the top-ranking pages, synthesizing the information, and writing custom, conversational answers directly on the search page.

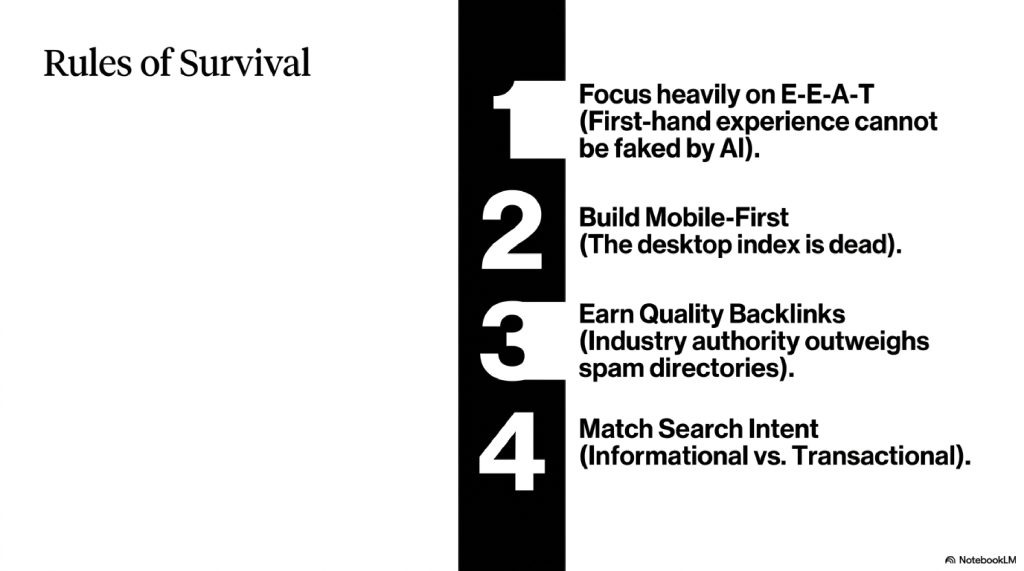

To survive in this new future, websites have to focus heavily on E-E-A-T (Experience, Expertise, Authoritativeness, and Trustworthiness). Search engines are actively looking for first-hand experience. If you rely on AI tools to mass-produce generic text, you will lose. The future of SEO belongs to creators who combine original human insight, well-structured metadata, and deep, authoritative knowledge that an AI cannot fake.

Interesting Facts Section

- The 15% Rule: Every single day, 15% of the queries typed into Google have never been searched before in the history of the internet. The AI has to understand entirely new, unique concepts daily.

- The Pigeon Update: Google’s localized algorithm knows that if you search for “coffee” in London, you want a cafe down the street, not a famous roaster in Seattle. Physical distance is a massive, silent ranking factor.

- Video Dominance: Google doesn’t just read text; it parses video. By analyzing video scripts and SEO-optimized tags, Google can jump a user directly to a specific timestamp in a YouTube video where their question is answered.
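The distance behind the local-search fact above is easy to compute. The sketch below uses the haversine formula to measure great-circle distance and sorts two results by how far they are from the searcher. The coordinates are approximate, the cafe is made up, and how Google actually weighs distance against other signals is not public.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometers between two lat/lon points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = math.radians(lat2 - lat1)
    d_lambda = math.radians(lon2 - lon1)
    a = (
        math.sin(d_phi / 2) ** 2
        + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2
    )
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


# Approximate coordinates; the cafe is hypothetical.
SEARCHER_IN_LONDON = (51.5074, -0.1278)
PLACES = {
    "Famous roaster in Seattle": (47.6062, -122.3321),
    "Cafe down the street": (51.5080, -0.1290),
}


def nearest_first(origin, places):
    """Sort place names by distance from the origin, closest first."""
    return sorted(places, key=lambda name: haversine_km(*origin, *places[name]))


ranked = nearest_first(SEARCHER_IN_LONDON, PLACES)
print(ranked)
```

The Seattle roaster sits several thousand kilometers away, so for a plain “coffee” query the nearby cafe is the far more useful answer.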


FAQ SECTION

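1. How long does it take for a new website to rank on Google? There is no fixed timeline, but a commonly cited rule of thumb is 3 to 6 months before a new website has built enough trust and authority to rank competitively. SEO practitioners call this waiting period the “sandbox.” Google has never confirmed that a formal sandbox exists, though it does need time to crawl, index, and evaluate a new site’s quality.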

2. Are backlinks still important? Yes, but quality matters over quantity. One link from a highly respected, relevant industry publication is worth more than a thousand links from random, low-quality spam directories.

3. Will Google penalize me if I use AI to write my articles? Google penalizes low-quality content, not necessarily AI content. If you use AI to mass-generate generic, unedited fluff, you will drop in rankings. If you use AI as an assistant to outline and structure original, expert insights, it can perform well.

4. What are Core Web Vitals? They are a set of specific metrics Google uses to measure a user’s experience on a page. They track loading speed, visual stability (does the page jump around?), and how quickly the page responds when a user interacts with it.

5. Why did my website’s traffic drop suddenly overnight? A common cause is a “Broad Core Algorithm Update.” Google releases these updates several times a year, recalibrating how it weighs ranking signals. A drop after one often means Google’s systems found other pages that better satisfy the searcher’s intent. Before blaming an update, rule out technical causes such as crawl errors or accidentally blocked pages.

6. Does having a mobile-friendly site actually matter? Yes. Google uses “Mobile-First Indexing,” meaning it predominantly crawls and indexes the mobile version of your site and uses that version for ranking. Content that appears only on the desktop version may not be indexed at all.

7. How do search engines handle duplicate content? If Google finds the exact same content on three different websites, it will usually pick the one it deems the “original” source to rank and ignore the other two to keep search results diverse.

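8. What is a Canonical Tag? It is a link element with rel="canonical", placed in a page’s HTML head, that tells the search engine, “Out of all these similar pages, this specific URL is the master copy that I want you to index and rank.” Google treats it as a strong hint rather than a binding command.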

9. Do social media “likes” and “shares” improve my ranking? Not directly. Social signals are not an official ranking factor. However, highly shared content generates more visibility, which naturally leads to other websites linking to it (which is a ranking factor).

10. What is “Search Intent”? It is the “why” behind a search. If someone searches “buy custom thumbnails,” the intent is Transactional (they want an online store). If they search “how to make YouTube thumbnails,” the intent is Informational. You must match the intent to rank.
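To make the canonical tag from question 8 concrete, here is a small sketch that reads it out of a page using only Python’s standard library. The sample HTML, the example.com URL, and the CanonicalFinder and find_canonical names are hypothetical. It shows what the tag looks like, not how a search engine processes it.

```python
from html.parser import HTMLParser

# Hypothetical page: a product URL with tracking parameters that
# declares the clean URL as its canonical version.
SAMPLE_PAGE = """
<html>
  <head>
    <title>Running Shoes</title>
    <link rel="stylesheet" href="/style.css">
    <link rel="canonical" href="https://example.com/shoes">
  </head>
  <body><h1>Running Shoes</h1></body>
</html>
"""


class CanonicalFinder(HTMLParser):
    """Remember the href of the first <link rel="canonical"> element."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        if tag != "link" or self.canonical is not None:
            return
        attributes = dict(attrs)
        # rel may hold several space-separated values, in any letter case.
        rel_values = (attributes.get("rel") or "").lower().split()
        if "canonical" in rel_values:
            self.canonical = attributes.get("href")


def find_canonical(html):
    """Return the canonical URL declared in the HTML, or None."""
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonical


print(find_canonical(SAMPLE_PAGE))
```

A site audit script could run a check like this across every URL variant to confirm that they all point to the same master copy.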


CONCLUSION

The modern search engine is arguably the most complex utility humanity has ever built. It is a system that seamlessly translates the messy, ambiguous nature of human curiosity into rigid mathematics, mapping the entirety of our collective knowledge in real time.

When you look behind the curtain, you realize that ranking on the internet isn’t about tricking a machine. It is about proving your value. The crawlers, the massive indices, and the semantic AI models all exist to serve a single master: the user. If your digital footprint provides a faster, clearer, and more authoritative answer than anyone else, the ghost in the machine will ensure the world finds you.
